Sat, 18 Feb 2006

Model-Renderer-Presenter: MVP for web apps?

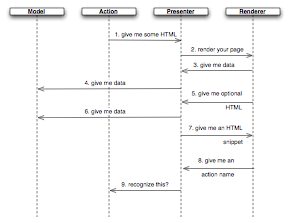

A client and I were talking over how Model-View-Presenter would work for web applications. The sequence diagram above describes a possible interpretation. Since the part that corresponds to a View just converts values into HTML text, I'm going to call it the Renderer instead. The Renderer can be either a template language (Velocity, Plone's ZPT, Sails's Viento) or, my bias, an XML builder like Ruby's Builder.
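To make the builder flavor of Renderer concrete, here is a minimal sketch in Python, with the standard library's `xml.etree.ElementTree` standing in for a builder like Ruby's Builder. The function name `render_invoice_page` and the page contents are made up for illustration; this is not the code from the spike.

```python
import xml.etree.ElementTree as ET


def render_invoice_page(amount_text):
    """Build a tiny page with an element builder instead of a template.

    Hypothetical example: the page structure and the "Invoice" title are
    illustrative assumptions, not taken from the original spike.
    """
    html = ET.Element("html")
    head = ET.SubElement(html, "head")
    ET.SubElement(head, "title").text = "Invoice"  # static content
    body = ET.SubElement(html, "body")
    # The one "blank" on the page; the builder escapes the text for us.
    ET.SubElement(body, "p", {"id": "amount"}).text = amount_text
    return ET.tostring(html, encoding="unicode")


page = render_invoice_page("$12.50")
```

One thing I like about the builder style is that escaping and tag balancing come for free, so the Renderer really does nothing but turn values into markup.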

I did a little Model-Renderer-Presenter spike this week and feel pretty happy with it. I'm wondering who else uses something like what I explain below and what implications it's had for testing. Mail me if you have pointers.

(Prior work: Mike Mason just wrote about MVP on ASP.NET. I understand from Adam Williams that Rails does something similar, albeit using mixins. So far handling the Rails book hasn't caused me to learn it. I may actually have to work through it.)

Here's the communication pattern from the sequence diagram:

After the Action gets some HTTP and does whatever it does to the Model, it creates the appropriate Presenter (there is one for each page) and asks it for the HTML for the page.

The Presenter asks the Renderer for the HTML for the page. The Renderer is the authority for the structure of the page and for any static content (the title, etc.). The Presenter is the authority for any content that depends on the state of the Model.

When the Renderer needs to "fill in a blank", it asks the Presenter. In this case, suppose it's asking for a quantity (like a billing amount). The Presenter gets that information from the Model.

The Renderer can also ask the Presenter to make a decision. In this case, suppose it asks the Presenter whether it should display an edit button. I decided that the Presenter should give back either an empty string or the HTML for the button. That works as follows:

First, the Presenter asks the Model for any data it needs to make the decision. Suppose that it decides the button should be displayed. But it's not an authority over what HTML should look like, so it...

... asks the Renderer for that button's HTML. As part of rendering that button, the Renderer needs to fill in the name of the Action the button should invoke. It could just fill in a constant value, but I want the program to check, at the time the page is displayed, whether that constant value actually corresponds to a real action. That way, any bad links will be detected whenever the page is rendered, not when the link is followed. Since there are unit tests that render each page, there will be no need for slow browser or HTTP tests to find bad links. Therefore...

... the Renderer asks the Presenter for the name to fill in.

The Presenter is the dispenser of Action names. Before giving the name to the Renderer, the Presenter asks the Action (layer) whether the name is valid. The Action will blow up if not. (It would make as much sense, maybe more, to have the Action be the authority over its name, but this happened to be most convenient for the program I started the spike with.)
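The whole conversation above can be sketched in a few dozen lines. This is a rough Python approximation under stated assumptions, not the spike itself: every class, method, and action name here (`Model`, `Actions`, `BillRenderer`, `BillPresenter`, `edit_bill`) is hypothetical, and the "Action layer" is reduced to an object that knows which action names are real.

```python
from html import escape


class Model:
    """Hypothetical model: a bill that may or may not be editable."""
    def __init__(self, amount_cents, editable):
        self.amount_cents = amount_cents
        self.editable = editable


class Actions:
    """Stand-in for the Action layer; it knows which action names are real."""
    def __init__(self, names):
        self._names = set(names)

    def check_name(self, name):
        # The Action blows up if the name is not valid.
        if name not in self._names:
            raise LookupError(f"no such action: {name}")
        return name

    def handle(self, model):
        # After doing whatever it does to the Model, the Action creates
        # the page's Presenter and asks it for the HTML.
        return BillPresenter(model, self, BillRenderer()).html()


class BillRenderer:
    """Authority for page structure and static content."""
    def page(self, presenter):
        return (
            "<html><head><title>Your bill</title></head><body>"
            f"<p>Amount due: {escape(presenter.amount())}</p>"  # a blank
            f"{presenter.edit_button()}"                        # a decision
            "</body></html>"
        )

    def edit_button(self, presenter):
        # The Renderer asks the Presenter for the action name to fill in.
        action = escape(presenter.edit_action_name(), quote=True)
        return f'<form action="{action}"><input type="submit" value="Edit"></form>'


class BillPresenter:
    """Authority for anything that depends on the Model's state."""
    def __init__(self, model, actions, renderer):
        self.model, self.actions, self.renderer = model, actions, renderer

    def html(self):
        return self.renderer.page(self)

    def amount(self):
        return f"${self.model.amount_cents / 100:.2f}"

    def edit_button(self):
        if not self.model.editable:
            return ""  # decision: no button, so an empty string
        return self.renderer.edit_button(self)  # the HTML is the Renderer's job

    def edit_action_name(self):
        # The Presenter dispenses the name, after checking it with the Action.
        return self.actions.check_name("edit_bill")


page = Actions(["edit_bill"]).handle(Model(1250, editable=True))
```

With an `Actions` object that doesn't know `edit_bill`, the same call raises `LookupError` while the page is being rendered, which is the point: the bad link shows up at render time, not when someone clicks it.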

What good is this? Classical Model-View-Presenter is about making the View a thin holder of whatever controls the windowing system provides. It does little besides route messages from the window system to the Presenter and vice versa. That lets you mock out the View so that Presenter tests don't have to interact with the real controls, which are usually a pain.

There's no call for that in a web app. The Renderer doesn't interact with a windowing framework; it just builds HTML, which is easy to work with. However, the separation does give us four objects (Action, Model, Renderer, and Presenter) that:

- can be created through test-driven design,
- can be tested independently of each other,
- and can be tested in a way that doesn't require many end-to-end tests to give confidence that almost all of the plausible bugs have been avoided.
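As a hint of what "tested independently" might look like, here is a small Python sketch using the standard `unittest` module. The `Renderer` and `Presenter` classes are cut-down, hypothetical versions written just for this example (the set of known action names stands in for the Action layer), and `SimpleNamespace` objects play the parts of canned collaborators.

```python
import unittest
from types import SimpleNamespace


class Renderer:
    """Hypothetical Renderer: structure and static text only."""
    def page(self, presenter):
        return f"<p>Amount due: {presenter.amount()}</p>{presenter.edit_button()}"

    def edit_button(self, presenter):
        return f'<a href="{presenter.edit_action_name()}">Edit</a>'


class Presenter:
    """Hypothetical Presenter: everything that depends on Model state."""
    def __init__(self, model, known_actions, renderer):
        self.model = model
        self.known_actions = known_actions  # assumption: stands in for the Action layer
        self.renderer = renderer

    def amount(self):
        return f"${self.model.amount_cents / 100:.2f}"

    def edit_button(self):
        return self.renderer.edit_button(self) if self.model.editable else ""

    def edit_action_name(self):
        name = "edit_bill"
        if name not in self.known_actions:
            raise LookupError(f"no such action: {name}")
        return name


class RendererTest(unittest.TestCase):
    def test_structure_with_canned_presenter(self):
        # No Model and no real Presenter: just canned answers.
        fake = SimpleNamespace(amount=lambda: "$1.00", edit_button=lambda: "")
        self.assertEqual(Renderer().page(fake), "<p>Amount due: $1.00</p>")


class PresenterTest(unittest.TestCase):
    def test_no_button_when_not_editable(self):
        model = SimpleNamespace(amount_cents=100, editable=False)
        presenter = Presenter(model, {"edit_bill"}, Renderer())
        self.assertEqual(presenter.edit_button(), "")

    def test_rendering_detects_bad_link(self):
        # Rendering the page is enough to find a link to a missing action.
        model = SimpleNamespace(amount_cents=100, editable=True)
        presenter = Presenter(model, known_actions=set(), renderer=Renderer())
        with self.assertRaises(LookupError):
            Renderer().page(presenter)
```

Saved in a file, these run with `python -m unittest`. None of them needs a browser or an HTTP request, and the last one is the bad-link check done entirely in a unit test.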

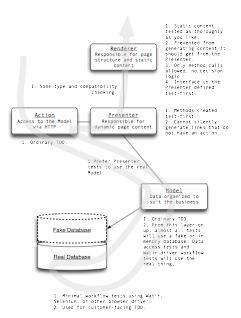

The second picture gives a hint of the kinds of checks and tests that make sense here.

More later, unless I find that someone else has already described this in detail.

## Posted at 10:31 in category /testing
